The other day I tried out the Z80 Pack emulator and got the idea to write some software for the Z80, something I’d never done.

In the 80s I programmed the 6502 only a little, and the 8088 a bit more. I was always interested in the Z80 but never had access to one capable of playing games (my ZX81 doesn’t count). Back then, if you wanted a decent arcade-style game, you wrote it in assembly language.

If I wanted to make good games for the Z80, I’d need to use machine code. Could I get an LLM to help me get started? To my surprise, the answer was yes. It produced a working “Hello world” program and a couple of more complex ones. I still want to try making a game to run on a ZX Spectrum emulator or something similar someday, but this experiment gave me another idea.

Watching how well Claude Code generated the Z80 code, I thought of something slightly more practical: could Claude Code + Opus 4.6 directly write highly optimized assembly for my current CPU? Modern CPUs have many capabilities that most compilers don’t fully exploit. You can build for a specific architecture, but getting the most out of it takes a fair amount of research, something the coding model might help me with.

This led me to a kind of radical idea: What if you simply replaced your compiler with a coding agent + LLM? You input English, and get assembly code out. The agent can even run the linker for you, to give you an executable right away! Can that actually work?

I tried two approaches: One for performance, and one for portability.

Intel Optimized NASM for Performance

My test problem is a “magic square” search. A 4x4 magic square is a grid filled with the integers 1 through 16, each used exactly once, arranged so that every row, every column and both main diagonals add up to the same number. For an n×n square using 1 through n², that number is n(n²+1)/2, which is 34 for the 4x4 case. Each valid arrangement counts as a different magic square.
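To pin the definition down, here is a minimal C check for the 4x4 case, assuming the grid is stored row by row in a 16-element array. The function names `magic_constant` and `is_magic4` are illustrative and are not taken from the author’s program.

```c
#include <stdbool.h>

/* Magic constant for an n x n square filled with 1..n^2: n(n^2+1)/2 (34 for n = 4). */
static int magic_constant(int n) {
    return n * (n * n + 1) / 2;
}

/* g holds the square row by row (g[4*r + c]). Returns true if it uses each of
   1..16 exactly once and all 4 rows, 4 columns and 2 diagonals sum to 34. */
static bool is_magic4(const int g[16]) {
    const int m = magic_constant(4);
    unsigned seen = 0;
    for (int k = 0; k < 16; k++) {
        if (g[k] < 1 || g[k] > 16 || (seen & (1u << g[k])))
            return false;                   /* out of range or repeated */
        seen |= 1u << g[k];
    }
    for (int r = 0; r < 4; r++) {
        int row = 0, col = 0;
        for (int c = 0; c < 4; c++) {
            row += g[4 * r + c];            /* row r */
            col += g[4 * c + r];            /* column r */
        }
        if (row != m || col != m)
            return false;
    }
    int diag = 0, anti = 0;
    for (int k = 0; k < 4; k++) {
        diag += g[4 * k + k];               /* top-left to bottom-right */
        anti += g[4 * k + (3 - k)];         /* top-right to bottom-left */
    }
    return diag == m && anti == m;
}
```

For example, Dürer’s 1514 square {16, 3, 2, 13, 5, 10, 11, 8, 9, 6, 7, 12, 4, 15, 14, 1} passes; swapping its first two entries leaves the top row at 34 but drops the first column to 21, so the check fails.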

Finding all the canonical 4x4 squares (ignoring rotations and reflections) took a few minutes on a typical computer from twenty years ago, and the problem was solved by hand in the 1600s. Finding all the 5x5 squares may take anywhere from a few hours to a few days on modern computers; all canonical 5x5 squares were first found in the early 1970s. All canonical 6x6 magic squares were only found in 2024.
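The “canonical” count can be made concrete. Each 4x4 square has 8 variants under rotation and reflection, and a common convention (Frénicle standard form) keeps the one whose top-left cell is the smallest corner and whose second top-row cell is smaller than the cell directly below the top-left corner. Labeling the grid a b c d / e f g h / i j k l / m n o p, those are the tests the NASM listing later in this post applies: d, m, p > a and e > b. The sketch below, with hypothetical helper names, walks all 8 symmetries of one square and counts how many pass; for any square with distinct entries it should be exactly one, which is why 880 unique squares correspond to 7040 in total.

```c
#include <stdbool.h>
#include <string.h>

/* Rotate a row-major 4x4 grid 90 degrees clockwise: out[r][c] = in[3-c][r]. */
static void rotate90(const int in[16], int out[16]) {
    for (int r = 0; r < 4; r++)
        for (int c = 0; c < 4; c++)
            out[4 * r + c] = in[4 * (3 - c) + r];
}

/* Reflect across the main diagonal: out[r][c] = in[c][r]. */
static void transpose4(const int in[16], int out[16]) {
    for (int r = 0; r < 4; r++)
        for (int c = 0; c < 4; c++)
            out[4 * r + c] = in[4 * c + r];
}

/* Frenicle standard form: a is the smallest corner and b < e. */
static bool is_standard_form(const int g[16]) {
    return g[0] < g[3] && g[0] < g[12] && g[0] < g[15] && g[1] < g[4];
}

/* Visit all 8 symmetries (each of the 4 rotations, with and without a
   transpose) and count how many are in standard form. */
static int count_standard_variants(const int g[16]) {
    int cur[16], tmp[16], count = 0;
    memcpy(cur, g, sizeof cur);
    for (int k = 0; k < 4; k++) {
        transpose4(cur, tmp);
        count += is_standard_form(cur) + is_standard_form(tmp);
        rotate90(cur, tmp);
        memcpy(cur, tmp, sizeof cur);
    }
    return count;
}
```

The reasoning behind “exactly one”: only one rotation brings the smallest corner to the top-left, the transpose of that rotation keeps it there, and the transpose swaps b and e, so exactly one of the two has b < e.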

Since the magic square search involves vector additions and matrix-style operations, it could be amenable to SIMD (Single Instruction, Multiple Data) instructions, among other advanced CPU features. Good references are out there; it’s just tedious to figure out exactly how to take full advantage of them. Claude Code is pretty good at it, though.
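As a small illustration of the SIMD idea (not code from the author’s program), GCC and Clang vector extensions can add whole rows of a 4x4 grid at once. With 32-bit entries a row fits in one 128-bit register, so four lane-wise adds produce all four column sums. The `v4si` type and the function names are assumptions made for this sketch.

```c
#include <string.h>

/* Four 32-bit lanes in one 16-byte vector; on x86-64, GCC and Clang map
   this to an SSE register. */
typedef int v4si __attribute__((vector_size(16)));

/* Column sums of a row-major 4x4 grid: each row is loaded as one vector and
   added lane-wise, so lane c accumulates column c (4 vector adds total). */
static v4si column_sums4(const int g[16]) {
    v4si acc = {0, 0, 0, 0};
    for (int r = 0; r < 4; r++) {
        v4si row;
        memcpy(&row, &g[4 * r], sizeof row);   /* alignment-safe load */
        acc += row;
    }
    return acc;
}

/* Returns 1 if every lane of s equals target. */
static int all_lanes_equal(v4si s, int target) {
    return s[0] == target && s[1] == target && s[2] == target && s[3] == target;
}
```

For Dürer’s square every lane comes out to 34. Row sums would need a horizontal add or a transpose first, which is part of why squeezing SIMD gains out of this problem is fiddly.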

After getting a C magic square finder working with SSE and special bitmask optimizations (it didn’t end up benefiting from AVX SIMD instructions) and a good algorithm, I had Claude Code + Opus 4.6 write an assembly version for NASM, a popular x86 macro assembler.

My first attempt at generating NASM worked, in that it produced a functional program, but the executable ran considerably slower than the optimized C built with gcc at -O3 (26.9 ms per run versus 9.4 ms in the table below). That’s not bad, but I suspected Claude had missed some tricks the C program used, so I asked it to read the C code and update the NASM output with the same or better algorithms and optimizations. The revised NASM ran faster than the optimized C. Here are the details.

● Now let's build and test for correctness.

● Bash(make clean && make 2>&1)

⎿ rm -f magic magic_asm *.o

gcc -O3 -march=native -mtune=native -flto -fopenmp -ffast-math -funroll-loops -Wall -Wextra -o magic magic.c

nasm -f elf64 -g -o magic_asm.o magic_asm.asm

gcc -no-pie -o magic_asm magic_asm.o

● Assembles clean. Now verify correctness — both unique and --all counts must match.

● Bash(echo "=== ASM unique ===" && ./magic_asm 4 && echo "=== ASM all ===" && ./magic_asm 4 --all)

⎿ === ASM unique ===

Magic Squares Finder (NASM Assembly - BMI Optimized)
====================================================

… +21 lines

● 880 unique, 7040 all — matches the C version exactly. Now benchmark.

● Bash(echo "=== C (1 thread) x100 ===" && time for i in $(seq 1 100); do ./magic 4 1 > /dev/null; done && echo "=== ASM x100 ===" && time for i in $(seq 1 100); do ./…)

⎿ === C (1 thread) x100 ===

=== ASM x100 ===

… +7 lines

● The optimized ASM is now faster than the C version.

┌───────────────────┬─────────────────┬─────────┐
│ Version           │ 100 runs (real) │ Per run │
├───────────────────┼─────────────────┼─────────┤
│ Original ASM      │ 2.69s           │ 26.9ms  │
├───────────────────┼─────────────────┼─────────┤
│ C (-O3, 1 thread) │ 0.94s           │ 9.4ms   │
├───────────────────┼─────────────────┼─────────┤
│ Optimized ASM     │ 0.68s           │ 6.8ms   │
└───────────────────┴─────────────────┴─────────┘

That's a ~4x speedup over the original ASM and ~1.4x faster than C -O3.

Here's a summary of what changed:

1. BMI1 bit iteration (TZCNT/BLSR) — inner loops (e, i, f, g, k) now skip unavailable values entirely instead of iterating 1-16 and checking each
2. Incremental bitmask — BT/BTS for O(1) uniqueness checks replacing growing chains of cmp/je (the old g check was 6 sequential compares, h was 7)
3. Precomputed sums — a+f, c+g, d+h, i+j, m+n hoisted before the k loop, and min_p replaces a test+branch with a single cmp
4. Canonical flag in register — r12d loaded once, avoiding repeated global memory loads
5. C-version fill order — e, i together (column 0 → m computed), then f, g (anti-diag → j computed, column 1 → n computed, row 1 → h computed), maximizing constraint propagation before the innermost k loop
6. Eliminated grid BSS writes — only the first solution writes to sample; subsequent finds just increment the counter
7. Temp bitmask in k loop — k, p, l, o uniqueness checked via BT against a temporary mask instead of pairwise comparisons
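The fill order and bitmask ideas in that list are easier to follow in C. Below is a sketch reconstructed from the comments in the NASM listing (it is not the author’s magic.c). Each loop draws candidates from `ALL_BITS & ~used` using `__builtin_ctz` and `x &= x - 1`, which GCC and Clang can lower to TZCNT and BLSR when BMI1 is enabled, and every cell that a line sum forces is computed rather than searched. Unlike the listing, it does not cap a at 12 in canonical mode; the corner tests prune those cases anyway.

```c
#include <stdint.h>

#define MAGIC 34
#define ALL_BITS 0x1FFFEu /* bits 1..16 set: one bit per value 1..16 */

/* True if v is a legal cell value (1..16) that is not in the used mask. */
static int fresh(int v, uint32_t used) {
    return v >= 1 && v <= 16 && !((used >> v) & 1u);
}

/* Count 4x4 magic squares using the listing's fill order
   a,b,c -> d -> e,i -> m -> f,g -> j,n,h -> k -> p,l,o
   on the grid  a b c d / e f g h / i j k l / m n o p.
   canonical != 0 adds the listing's tests d, m, p > a and e > b.
   The loops over es/is/fs/gs/ks are sets of still-available values. */
static long count_magic4(int canonical) {
    long total = 0;
    for (int a = 1; a <= 16; a++)
    for (int b = 1; b <= 16; b++) {
        if (b == a) continue;
        for (int c = 1; c <= 16; c++) {
            if (c == a || c == b) continue;
            uint32_t u0 = (1u << a) | (1u << b) | (1u << c);
            int d = MAGIC - a - b - c;                       /* row 0 */
            if (!fresh(d, u0) || (canonical && d <= a)) continue;
            u0 |= 1u << d;
            for (uint32_t es = ALL_BITS & ~u0; es; es &= es - 1) {
                int e = __builtin_ctz(es);                   /* lowest free value */
                if (canonical && e <= b) continue;
                uint32_t u1 = u0 | (1u << e);
                for (uint32_t is = ALL_BITS & ~u1; is; is &= is - 1) {
                    int i = __builtin_ctz(is);
                    uint32_t u2 = u1 | (1u << i);
                    int m = MAGIC - a - e - i;               /* column 0 */
                    if (!fresh(m, u2) || (canonical && m <= a)) continue;
                    u2 |= 1u << m;
                    for (uint32_t fs = ALL_BITS & ~u2; fs; fs &= fs - 1) {
                        int f = __builtin_ctz(fs);
                        uint32_t u3 = u2 | (1u << f);
                        for (uint32_t gs = ALL_BITS & ~u3; gs; gs &= gs - 1) {
                            int g = __builtin_ctz(gs);
                            uint32_t u4 = u3 | (1u << g);
                            int j = MAGIC - d - g - m;       /* anti-diagonal */
                            if (!fresh(j, u4)) continue;
                            u4 |= 1u << j;
                            int n = MAGIC - b - f - j;       /* column 1 */
                            if (!fresh(n, u4)) continue;
                            u4 |= 1u << n;
                            int h = MAGIC - e - f - g;       /* row 1 */
                            if (!fresh(h, u4)) continue;
                            u4 |= 1u << h;
                            for (uint32_t ks = ALL_BITS & ~u4; ks; ks &= ks - 1) {
                                int k = __builtin_ctz(ks);
                                uint32_t u5 = u4 | (1u << k);
                                int p = MAGIC - a - f - k;   /* main diagonal */
                                if (!fresh(p, u5) || (canonical && p <= a)) continue;
                                u5 |= 1u << p;
                                int l = MAGIC - d - h - p;   /* column 3 */
                                if (!fresh(l, u5)) continue;
                                u5 |= 1u << l;
                                int o = MAGIC - c - g - k;   /* column 2 */
                                if (!fresh(o, u5)) continue;
                                /* rows 2 and 3 are the only lines not yet forced */
                                if (i + j + k + l == MAGIC && m + n + o + p == MAGIC)
                                    total++;
                            }
                        }
                    }
                }
            }
        }
    }
    return total;
}
```

If the reconstruction is faithful it should reproduce the counts from the transcript: 880 with `canonical` set and 7040 without.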

It’s incredible to me that the LLM can produce such a high-performance solution with so little effort on my part. For certain scientific computing applications it may be worthwhile to generate compute-heavy routines directly in assembly with an LLM, something I would not have expected to be feasible.

On a compute cluster where every node has the same architecture, you can make maximal use of CPU-specific instructions. For instance, if you know all your CPUs support AVX-512, you could build a version of your library that takes full advantage of it. Without a capable coding agent, this sort of specialization would probably be prohibitively time-consuming for non-specialist developers.
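One practical wrinkle: a binary that uses BMI1 or AVX-512 instructions dies with an illegal-instruction fault on a CPU that lacks them (the NASM listing below notes that it requires BMI1). Outside a uniform cluster, a common pattern is runtime dispatch. Here is a minimal sketch using the GCC/Clang builtins `__builtin_cpu_init` and `__builtin_cpu_supports` together with the `target` function attribute; the bit-summing functions are hypothetical stand-ins for a real hot loop.

```c
#include <stdint.h>

/* Portable fallback: test bit positions one at a time. */
static int sum_bits_generic(uint32_t mask) {
    int sum = 0;
    for (int v = 0; v < 32; v++)
        if ((mask >> v) & 1u)
            sum += v;                 /* add the value this bit stands for */
    return sum;
}

#if defined(__x86_64__) && (defined(__GNUC__) || defined(__clang__))
/* BMI1 variant: target("bmi") lets the compiler use TZCNT/BLSR inside this
   one function only, so it must only run on CPUs that report BMI1. */
__attribute__((target("bmi")))
static int sum_bits_bmi(uint32_t mask) {
    int sum = 0;
    while (mask) {
        sum += __builtin_ctz(mask);   /* index of the lowest set bit */
        mask &= mask - 1;             /* clear the lowest set bit */
    }
    return sum;
}
#endif

typedef int (*sum_bits_fn)(uint32_t);

/* Choose an implementation once, based on the CPU the program runs on. */
static sum_bits_fn pick_sum_bits(void) {
#if defined(__x86_64__) && (defined(__GNUC__) || defined(__clang__))
    __builtin_cpu_init();
    if (__builtin_cpu_supports("bmi"))
        return sum_bits_bmi;
#endif
    return sum_bits_generic;
}
```

The same structure should work for AVX-512 by testing `__builtin_cpu_supports("avx512f")` and compiling the fast path with `target("avx512f")`.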

I haven’t tried any GPU programming this way yet. I suspect it would help a lot to learn it reasonably well yourself first.

If you’re curious, here’s the assembly code for the 4x4 magic square finder.


```nasm
; Magic Squares Finder - Optimized NASM x86-64
; 4x4 magic squares with BMI1 bit iteration
;
; Optimizations over original:
; 1. BMI1 (TZCNT/BLSR) for inner loop iteration - skip unavailable values
; 2. Incremental bitmask with BT/BTS - O(1) uniqueness checks
; 3. Precomputed partial sums hoisted out of innermost loop
; 4. Canonical flag loaded once into register
; 5. C-version fill order for better constraint propagation
; 6. Sample grid stored directly from registers/stack (scalar moves)
; 7. Skip grid store on non-first solutions
;
; Fill order: a,b,c -> d(row0) -> e,i(BMI1) -> m(col0) -> f,g(BMI1) ->
; j(anti-diag),n(col1),h(row1) -> k(BMI1) -> p,l,o(computed)
;
; Requires: BMI1 (Haswell+)

bits 64
default rel

section .note.GNU-stack noalloc noexec nowrite progbits

extern printf
extern atoi
extern exit

section .data
banner:     db "Magic Squares Finder (NASM Assembly - BMI Optimized)", 10
            db "====================================================", 10, 0
usage_fmt:  db "Usage: %s <size> [--all]", 10, 0
search_fmt: db "Searching for %s 4x4 magic squares...", 10, 0
unique_str: db "unique", 0
all_str:    db "all", 0
result_fmt: db 10, "Found: %lu magic squares", 10, 0
sample_fmt: db 10, "Sample:", 10, 0
row_fmt:    db " %3d %3d %3d %3d", 10, 0

section .bss
sample:     resd 16
total:      resq 1
has_sample: resq 1
canonical:  resq 1

section .text
global main

%define MAGIC 34
%define ALL_BITS 0x1FFFE            ; bits 1-16 set

main:
    push rbp
    mov rbp, rsp
    push r12
    push r13
    push r14
    push r15
    push rbx
    sub rsp, 8                      ; keep rsp 16-byte aligned for calls
    mov r14d, edi                   ; argc is an int; 32-bit mov zero-extends r14
    mov r15, rsi                    ; argv
    lea rdi, [banner]
    xor eax, eax
    call printf wrt ..plt
    cmp r14, 2
    jl .usage
    mov rdi, [r15 + 8]
    call atoi wrt ..plt
    cmp eax, 4
    jne .usage
    mov qword [canonical], 1
    cmp r14, 3
    jl .run
    mov rdi, [r15 + 16]
    cmp byte [rdi], '-'
    jne .run
    mov qword [canonical], 0
.run:
    lea rdi, [search_fmt]
    cmp qword [canonical], 0
    lea rsi, [all_str]              ; lea does not change flags
    je .print_mode
    lea rsi, [unique_str]
.print_mode:
    xor eax, eax
    call printf wrt ..plt
    mov qword [total], 0
    mov qword [has_sample], 0
    call search
    lea rdi, [result_fmt]
    mov rsi, [total]
    xor eax, eax
    call printf wrt ..plt
    cmp qword [has_sample], 0
    je .exit
    lea rdi, [sample_fmt]
    xor eax, eax
    call printf wrt ..plt
    mov r12, 4
    lea r13, [sample]
.print_row:
    lea rdi, [row_fmt]
    mov esi, [r13]
    mov edx, [r13+4]
    mov ecx, [r13+8]
    mov r8d, [r13+12]
    xor eax, eax
    call printf wrt ..plt
    add r13, 16
    dec r12
    jnz .print_row
.exit:
    xor eax, eax
    add rsp, 8
    pop rbx
    pop r15
    pop r14
    pop r13
    pop r12
    pop rbp
    ret
.usage:
    lea rdi, [usage_fmt]
    mov rsi, [r15]
    xor eax, eax
    call printf wrt ..plt
    mov edi, 1
    call exit wrt ..plt

; ============================================================================
; search - Find all 4x4 magic squares
;
; Register usage in hot path:
; ebx = used values bitmask (bits 1-16), maintained incrementally
; r12d = canonical flag (loaded once, constant during search)
; r14d = min_p (canonical ? a+1 : 1), precomputed before k loop
; r8d = a+f (precomputed for k loop)
; r9d = c+g (precomputed for k loop)
; r10d = d+h (precomputed for k loop)
; r11d = i+j (precomputed for k loop)
; r15d = m+n (precomputed for k loop)
; r13d = k loop BMI1 iterator
;
; Stack frame (72 bytes):
; [rsp+0] a [rsp+4] b [rsp+8] c [rsp+12] d
; [rsp+16] e [rsp+20] i [rsp+24] m [rsp+28] f
; [rsp+32] g [rsp+36] j [rsp+40] n [rsp+44] h
; [rsp+48] e_iter [rsp+52] i_iter [rsp+56] f_iter [rsp+60] g_iter
; [rsp+64] max_a
; ============================================================================
search:
    push rbp
    mov rbp, rsp
    push rbx
    push r12
    push r13
    push r14
    push r15
    sub rsp, 72

    ; Hoist canonical flag into r12d (constant for entire search)
    mov r12d, dword [canonical]

    ; max_a: 16 in --all mode. In canonical mode a is the smallest corner,
    ; and the search stops at 12, the same bound the C version uses.
    mov dword [rsp+64], 16
    test r12d, r12d
    jz .start
    mov dword [rsp+64], 12
.start:
    mov dword [rsp+0], 1

    ; ---- a loop (1 to max_a) ----
.loop_a:
    mov eax, [rsp+0]
    cmp eax, [rsp+64]
    jg .done
    ; Initialize mask with just a
    xor ebx, ebx
    bts ebx, eax
    mov dword [rsp+4], 1

    ; ---- b loop (1 to 16, skip if used) ----
.loop_b:
    mov ecx, [rsp+4]
    cmp ecx, 16
    jg .next_a
    bt ebx, ecx
    jc .next_b
    bts ebx, ecx                    ; mask = {a, b}
    mov dword [rsp+8], 1

    ; ---- c loop (1 to 16, skip if used) ----
.loop_c:
    mov ecx, [rsp+8]
    cmp ecx, 16
    jg .end_c
    bt ebx, ecx
    jc .next_c
    ; d = 34 - a - b - c
    mov eax, MAGIC
    sub eax, [rsp+0]
    sub eax, [rsp+4]
    sub eax, [rsp+8]
    cmp eax, 1
    jl .next_c
    cmp eax, 16
    jg .next_c
    bt ebx, eax
    jc .next_c
    ; Canonical: d > a
    test r12d, r12d
    jz .d_ok
    cmp eax, [rsp+0]
    jle .next_c
.d_ok:
    mov [rsp+12], eax
    ; Mark c, d in mask
    mov ecx, [rsp+8]
    bts ebx, ecx
    bts ebx, eax                    ; mask = {a,b,c,d}

    ; === e loop (BMI1 bit iteration) ===
    mov r8d, ALL_BITS
    andn r8d, ebx, r8d              ; available = ALL_BITS & ~used
    ; Canonical: e > b -> mask out bits 0..b
    test r12d, r12d
    jz .e_ready
    mov ecx, [rsp+4]
    add ecx, 1
    or edx, -1
    shl edx, cl
    and r8d, edx
.e_ready:
.loop_e:
    test r8d, r8d
    jz .done_e
    tzcnt ecx, r8d
    blsr r8d, r8d
    mov [rsp+16], ecx               ; save e
    mov [rsp+48], r8d               ; save e iterator
    bts ebx, ecx                    ; mask |= {e}

    ; === i loop (BMI1 bit iteration) ===
    mov r9d, ALL_BITS
    andn r9d, ebx, r9d
.loop_i:
    test r9d, r9d
    jz .done_i
    tzcnt ecx, r9d                  ; i
    blsr r9d, r9d
    ; m = 34 - a - e - i (column 0 constraint)
    mov eax, MAGIC
    sub eax, [rsp+0]
    sub eax, [rsp+16]
    sub eax, ecx
    cmp eax, 1
    jl .loop_i
    cmp eax, 16
    jg .loop_i
    ; Check m not used (temp mask with i added)
    mov edx, ebx
    bts edx, ecx
    bt edx, eax
    jc .loop_i
    ; Canonical: m > a
    test r12d, r12d
    jz .m_ok
    cmp eax, [rsp+0]
    jle .loop_i
.m_ok:
    mov [rsp+20], ecx               ; save i
    mov [rsp+24], eax               ; save m
    mov [rsp+52], r9d               ; save i iterator
    bts ebx, ecx                    ; mask |= {i}
    bts ebx, eax                    ; mask |= {m}

    ; === f loop (BMI1 bit iteration) ===
    mov r10d, ALL_BITS
    andn r10d, ebx, r10d
.loop_f:
    test r10d, r10d
    jz .done_f
    tzcnt ecx, r10d                 ; f
    blsr r10d, r10d
    mov [rsp+28], ecx               ; save f
    mov [rsp+56], r10d              ; save f iterator
    bts ebx, ecx                    ; mask |= {f}

    ; === g loop (BMI1 bit iteration) ===
    mov r11d, ALL_BITS
    andn r11d, ebx, r11d
.loop_g:
    test r11d, r11d
    jz .done_g
    tzcnt ecx, r11d                 ; g
    blsr r11d, r11d
    ; j = 34 - d - g - m (anti-diagonal)
    mov eax, MAGIC
    sub eax, [rsp+12]
    sub eax, ecx
    sub eax, [rsp+24]
    cmp eax, 1
    jl .loop_g
    cmp eax, 16
    jg .loop_g
    ; temp mask with g added, check j
    mov edx, ebx
    bts edx, ecx
    bt edx, eax
    jc .loop_g
    ; n = 34 - b - f - j (column 1)
    mov esi, MAGIC
    sub esi, [rsp+4]
    sub esi, [rsp+28]
    sub esi, eax
    cmp esi, 1
    jl .loop_g
    cmp esi, 16
    jg .loop_g
    bts edx, eax                    ; temp |= {j}
    bt edx, esi
    jc .loop_g
    ; h = 34 - e - f - g (row 1, constant in k loop)
    mov edi, MAGIC
    sub edi, [rsp+16]
    sub edi, [rsp+28]
    sub edi, ecx
    cmp edi, 1
    jl .loop_g
    cmp edi, 16
    jg .loop_g
    bts edx, esi                    ; temp |= {n}
    bt edx, edi
    jc .loop_g
    ; All pre-k checks passed - save state
    mov [rsp+32], ecx               ; g
    mov [rsp+36], eax               ; j
    mov [rsp+40], esi               ; n
    mov [rsp+44], edi               ; h
    mov [rsp+60], r11d              ; g iterator
    ; Mark g, j, n, h in main mask
    bts ebx, ecx
    bts ebx, eax
    bts ebx, esi
    bts ebx, edi
    ; mask = {a,b,c,d,e,i,m,f,g,j,n,h} (12 values)

    ; === k loop (BMI1, innermost) ===
    ; Precompute constants used in k loop body
    mov r8d, [rsp+0]
    add r8d, [rsp+28]               ; r8d = a + f (for p = 34 - a - f - k)
    mov r9d, [rsp+8]
    add r9d, [rsp+32]               ; r9d = c + g (for o = 34 - c - g - k)
    mov r10d, [rsp+12]
    add r10d, [rsp+44]              ; r10d = d + h (for l = 34 - d - h - p)
    mov r11d, [rsp+20]
    add r11d, [rsp+36]              ; r11d = i + j (for row 2 verify)
    mov r15d, [rsp+24]
    add r15d, [rsp+40]              ; r15d = m + n (for row 3 verify)
    ; Precompute min_p to avoid canonical branch in k loop
    mov r14d, 1
    test r12d, r12d
    jz .minp_set
    mov r14d, [rsp+0]
    inc r14d                        ; min_p = a + 1
.minp_set:
    mov r13d, ALL_BITS
    andn r13d, ebx, r13d            ; available for k
.loop_k:
    test r13d, r13d
    jz .done_k
    tzcnt ecx, r13d                 ; k
    blsr r13d, r13d
    ; p = 34 - (a+f) - k
    mov eax, MAGIC
    sub eax, r8d
    sub eax, ecx
    cmp eax, 1
    jl .loop_k
    cmp eax, 16
    jg .loop_k
    ; Temp mask: add k, check p
    mov edi, ebx
    bts edi, ecx
    bt edi, eax
    jc .loop_k
    ; Canonical: p > a (p >= min_p)
    cmp eax, r14d
    jl .loop_k
    bts edi, eax                    ; temp |= {p}
    ; l = 34 - (d+h) - p
    mov edx, MAGIC
    sub edx, r10d
    sub edx, eax
    cmp edx, 1
    jl .loop_k
    cmp edx, 16
    jg .loop_k
    bt edi, edx
    jc .loop_k
    bts edi, edx                    ; temp |= {l}
    ; o = 34 - (c+g) - k
    mov esi, MAGIC
    sub esi, r9d
    sub esi, ecx
    cmp esi, 1
    jl .loop_k
    cmp esi, 16
    jg .loop_k
    bt edi, esi
    jc .loop_k
    ; Verify row 2: i + j + k + l = 34
    mov edi, r11d                   ; i + j
    add edi, ecx                    ; + k
    add edi, edx                    ; + l
    cmp edi, MAGIC
    jne .loop_k
    ; Verify row 3: m + n + o + p = 34
    mov edi, r15d                   ; m + n
    add edi, esi                    ; + o
    add edi, eax                    ; + p
    cmp edi, MAGIC
    jne .loop_k
    ; *** FOUND A MAGIC SQUARE! ***
    ; ecx=k, eax=p, edx=l, esi=o
    inc qword [total]
    ; Only store sample on first find
    cmp qword [has_sample], 0
    jne .loop_k
    mov qword [has_sample], 1
    ; Store sample directly (register values first, then stack)
    lea rdi, [sample]
    mov [rdi+60], eax               ; p
    mov [rdi+56], esi               ; o
    mov [rdi+44], edx               ; l
    mov [rdi+40], ecx               ; k
    ; Now eax/ecx/edx/esi are free as scratch
    mov eax, [rsp+0]
    mov [rdi], eax                  ; a
    mov eax, [rsp+4]
    mov [rdi+4], eax                ; b
    mov eax, [rsp+8]
    mov [rdi+8], eax                ; c
    mov eax, [rsp+12]
    mov [rdi+12], eax               ; d
    mov eax, [rsp+16]
    mov [rdi+16], eax               ; e
    mov eax, [rsp+28]
    mov [rdi+20], eax               ; f
    mov eax, [rsp+32]
    mov [rdi+24], eax               ; g
    mov eax, [rsp+44]
    mov [rdi+28], eax               ; h
    mov eax, [rsp+20]
    mov [rdi+32], eax               ; i
    mov eax, [rsp+36]
    mov [rdi+36], eax               ; j
    mov eax, [rsp+24]
    mov [rdi+48], eax               ; m
    mov eax, [rsp+40]
    mov [rdi+52], eax               ; n
    jmp .loop_k
.done_k:
    ; Unmark g, j, n, h from mask
    mov ecx, [rsp+32]
    btr ebx, ecx
    mov ecx, [rsp+36]
    btr ebx, ecx
    mov ecx, [rsp+40]
    btr ebx, ecx
    mov ecx, [rsp+44]
    btr ebx, ecx
    ; Restore g iterator
    mov r11d, [rsp+60]
    jmp .loop_g
.done_g:
    ; Unmark f
    mov ecx, [rsp+28]
    btr ebx, ecx
    ; Restore f iterator
    mov r10d, [rsp+56]
    jmp .loop_f
.done_f:
    ; Unmark i, m
    mov ecx, [rsp+20]
    btr ebx, ecx
    mov ecx, [rsp+24]
    btr ebx, ecx
    ; Restore i iterator
    mov r9d, [rsp+52]
    jmp .loop_i
.done_i:
    ; Unmark e
    mov ecx, [rsp+16]
    btr ebx, ecx
    ; Restore e iterator
    mov r8d, [rsp+48]
    jmp .loop_e
.done_e:
    ; Unmark c, d
    mov ecx, [rsp+8]
    btr ebx, ecx
    mov ecx, [rsp+12]
    btr ebx, ecx
.next_c:
    inc dword [rsp+8]
    jmp .loop_c
.end_c:
    ; Unmark b
    mov ecx, [rsp+4]
    btr ebx, ecx
.next_b:
    inc dword [rsp+4]
    jmp .loop_b
.next_a:
    inc dword [rsp+0]
    jmp .loop_a
.done:
    add rsp, 72
    pop r15
    pop r14
    pop r13
    pop r12
    pop rbx
    pop rbp
    ret
```

Generic QBE Low-Level Output for Portability

While emitting code for a macro assembler is an interesting party trick, it isn’t portable at all, so it’s not useful beyond very predictable environments.

QBE (Quick Back End), on the other hand, is a simple intermediate language that isn’t tied to one processor. Its stated goal is to provide about 70% of the performance of industrial optimizing compilers in roughly 10% of the code, and it is much lighter-weight than LLVM. So in principle an LLM could produce QBE code directly from a prompt, QBE could translate it to x86-64 assembly (it also targets arm64 and RISC-V), and you could assemble and link that into a Linux binary.

This way, you’re not locking yourself into the specific hardware you build on. It should be a bit easier to debug, too.

To help Claude Code, I did a bit of research on QBE and pasted some known-good code for simple programs into a file I called qbe_prompt.md. I explained how to build QBE and how to build and link QBE programs, and asked Claude to read the file and write its own summary after building the QBE utility. It prepared a CLAUDE.md, and its output ended like this:

- QBE built from source → ~/.local/bin/qbe

- Full pipeline verified: .ssa → QBE → .s → CC → binary → runs

Project files created:

- CLAUDE.md — comprehensive QBE IL reference (sigils, types, control flow, memory, calling convention, comparisons, conversions, aggregate types, linking, common pitfalls). This is loaded automatically every conversation.

- build.sh — build+run script. Usage: ./build.sh prog.ssa [helpers.o] [-lm] [-lpng], set RUN=1 to execute.

- hello.ssa — smoke test (verified working)

- examples/c_interop/ — demonstrates linking QBE with a C .o file (verified working)

C interop approach for linking any .o:

1. Compile C to .o with cc -c file.c -o file.o

2. In QBE, just call $function_name(...) — any $ symbol resolves at link time

3. Pass the .o to build.sh alongside the .ssa

For graphics/image output, the plan is:

- Simple cases: write raw PPM directly from QBE using $fopen/$fwrite (zero deps)

- PNG/complex formats: write a thin C wrapper around libpng or stb_image_write, compile to .o, link it

Ready to write QBE programs. What would you like to build first?
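To illustrate the C interop steps in that plan, here is a minimal hypothetical helper (not part of the generated project) that writes a binary PPM (P6) image to an already-open file. Compiled with `cc -c` into a .o and passed to build.sh alongside the .ssa, it could be called from QBE as `%r =w call $write_ppm(l %fp, w %w, w %h, l %buf)`. A thin libpng wrapper would follow the same pattern.

```c
#include <stdio.h>

/* Hypothetical helper: write an 8-bit binary PPM (P6) image to fp.
   rgb holds w*h pixels of 3 bytes each (R, G, B), top row first.
   Returns 0 on success, -1 if the header or pixel data fails to write. */
int write_ppm(FILE *fp, int w, int h, const unsigned char *rgb) {
    if (fprintf(fp, "P6\n%d %d\n255\n", w, h) < 0)
        return -1;
    size_t n = (size_t)w * (size_t)h * 3;   /* total pixel bytes */
    return fwrite(rgb, 1, n, fp) == n ? 0 : -1;
}
```

A 2x1 image produces the 11-byte header `P6\n2 1\n255\n` followed by 6 pixel bytes.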

Next, I gave it a ridiculously generic prompt and, with no additional prompting, got back a perfectly working, well-performing QBE program:

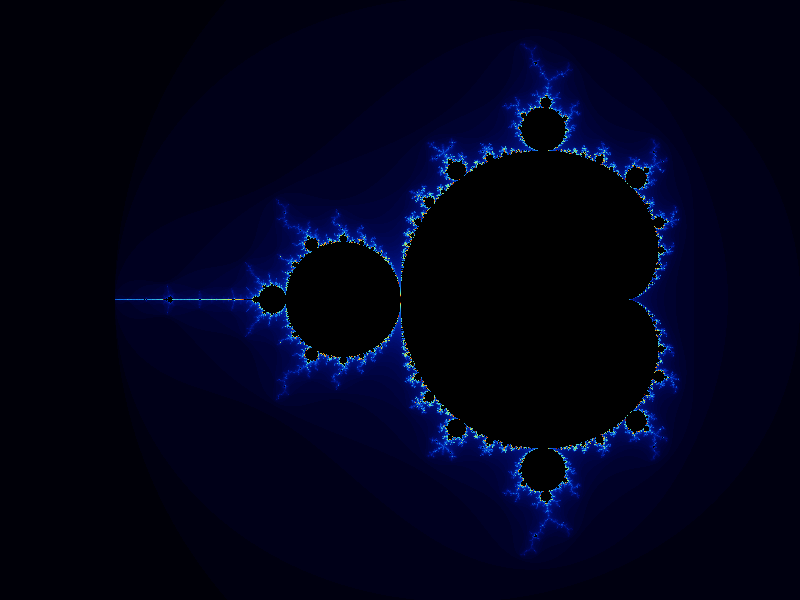

❯ Make a mandelbrot set generator that outputs a BMP or other lossless format.

● It works. Here's what was built:

mandelbrot.ssa — 100% QBE IL, no C code, no external libraries:

- 800x600 24-bit BMP output in 53ms

- Classic Mandelbrot view: real [-2.5, 1.0], imag [-1.3125, 1.3125]

- 256 max iterations

- Smooth polynomial color palette (blue/orange tones)

- Writes valid BMP directly using fopen/fwrite/fclose from libc

Structure (4 functions):

- $iterate — core Mandelbrot escape loop using SSA phi nodes

- $color — maps iteration count to BGR bytes via polynomial palette

- $write_header — builds 54-byte BMP header on the stack, writes it

- $main — opens file, iterates over all pixels row-by-row, writes BMP

Build and run: RUN=1 ./build.sh mandelbrot.ssa

On the first shot it rendered a nice view:

Then I prompted like this to check the relative quality of the QBE output:

❯ As a comparison to the gcc back end output, can we make a similar program in C that uses the same algorithmic approach and makes the same format of output and image as much as possible? This will give a ball-park estimate of the quality of the generated QBE code and the Claude Code ability to directly generate it vs the gcc back-end optimizations and the C compiler.

⎿ === Mandelbrot Benchmark (800x600, 256 iter, 20 runs) ===

QBE (hand-written IL) min= 49 ms median= 50 ms max= 52 ms

… +4 lines

⎿ (timeout 2m)

● Now verify the images are pixel-identical.

● Bash(./mandelbrot_qbe && mv mandelbrot.bmp mandelbrot_qbe.bmp && ./mandelbrot_c_O2 && python3 -c "

a = open('mandelbrot_qbe.bmp','rb').read()…)

⎿ QBE size: 1440054, GCC size: 1440054

Headers match: True

Files are BYTE-IDENTICAL

● Excellent. Here are the results:

Benchmark: Mandelbrot 800x600, 256 iterations, 20 runs

┌───────────────────────┬────────┬─────────────────────┐
│ Build                 │ Median │ vs QBE              │
├───────────────────────┼────────┼─────────────────────┤
│ QBE (hand-written IL) │ 50 ms  │ baseline            │
├───────────────────────┼────────┼─────────────────────┤
│ GCC -O0               │ 102 ms │ QBE is 2x faster    │
├───────────────────────┼────────┼─────────────────────┤
│ GCC -O2               │ 39 ms  │ GCC is 1.28x faster │
├───────────────────────┼────────┼─────────────────────┤
│ GCC -O3               │ 39 ms  │ GCC is 1.28x faster │
├───────────────────────┼────────┼─────────────────────┤
│ GCC -O3 -march=native │ 43 ms  │ GCC is 1.16x faster │
└───────────────────────┴────────┴─────────────────────┘

Output: Byte-identical BMP files — same headers, same pixels.

Takeaways:

- QBE-compiled code is 2x faster than unoptimized GCC (-O0), showing QBE's backend does meaningful register allocation and instruction selection even on "hand-written" IL.

- GCC -O2/-O3 beats QBE by ~28%, which is expected — GCC does loop unrolling, SIMD, constant propagation, and inlining that QBE doesn't attempt. QBE is designed to be a simple, fast-compiling backend, not an aggressive optimizer.

- Interestingly -O3 -march=native is slightly slower than plain -O3 here — possibly auto-vectorization overhead on this particular workload with the branch-heavy escape loop.

- The fact that Claude-authored QBE IL lands between -O0 and -O2 on the first attempt with no manual tuning is a solid result.

It would be pointless to try to make the fastest magic square search with QBE. I picked the Mandelbrot set as a test to see whether an ordinary small program would land reasonably close in performance to the same thing written in generic C and built with the standard compiler and back end, and it did.
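For a sense of what the C side of that comparison involves, here is a sketch of the escape-time loop written to mirror `$iterate` in the QBE listing below. The author’s C program isn’t shown, so this is an assumed equivalent, and `mandel_iterate` is a hypothetical name. As in the QBE version, z starts at 0, the magnitude test runs before each update, and points that never escape return `max_iter`.

```c
/* Escape-time iteration mirroring $iterate: returns the first i at which
   |z|^2 > 4, or max_iter if z never escapes. */
static int mandel_iterate(double cr, double ci, int max_iter) {
    double zr = 0.0, zi = 0.0;
    for (int i = 0; i < max_iter; i++) {
        double zr2 = zr * zr, zi2 = zi * zi;
        if (zr2 + zi2 > 4.0)
            return i;                     /* escaped after i updates */
        double nzr = zr2 - zi2 + cr;      /* Re(z^2 + c) */
        zi = 2.0 * zr * zi + ci;          /* Im(z^2 + c) */
        zr = nzr;
    }
    return max_iter;                      /* treated as inside the set */
}
```

For example, c = 0 never escapes, while c = 1 escapes after three updates as z goes 0, 1, 2, 5.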

If you’re curious, here’s the QBE program:


```
# mandelbrot.ssa — Mandelbrot set to 24-bit BMP file
#
# Image: 800x600, region: real [-2.5, 1.0], imag [-1.3125, 1.3125]
# Max iterations: 256
# Output: mandelbrot.bmp

data $filename = { b "mandelbrot.bmp", b 0 }
data $mode = { b "wb", b 0 }

# Mandelbrot escape iteration
# Returns iteration count when |z|² > 4, or max_iter if never escapes
function w $iterate(d %cr, d %ci, w %max_iter) {
@start
    jmp @head
@head
    %i =w phi @start 0, @body %i1
    %zr =d phi @start d_0, @body %zr1
    %zi =d phi @start d_0, @body %zi1
    %done =w ceqw %i, %max_iter
    jnz %done, @ret_max, @check
@check
    %zr2 =d mul %zr, %zr
    %zi2 =d mul %zi, %zi
    %mag2 =d add %zr2, %zi2
    %esc =w cgtd %mag2, d_4.0
    jnz %esc, @ret_i, @body
@body
    # z_new = z² + c
    # zr1 = zr² - zi² + cr
    %diff =d sub %zr2, %zi2
    %zr1 =d add %diff, %cr
    # zi1 = 2·zr·zi + ci
    %prod =d mul %zr, %zi
    %prod2 =d add %prod, %prod
    %zi1 =d add %prod2, %ci
    %i1 =w add %i, 1
    jmp @head
@ret_max
    ret %max_iter
@ret_i
    ret %i
}

# Map iteration count to a BGR color triple, stored at %dst (3 bytes)
# Uses smooth polynomial palette:
# R = 9·(1-t)·t³·255
# G = 15·(1-t)²·t²·255
# B = 8.5·(1-t)³·t·255
# where t = iter/max_iter
function $color(l %dst, w %iter, w %max_iter) {
@start
    %in_set =w ceqw %iter, %max_iter
    jnz %in_set, @black, @calc
@black
    storeb 0, %dst
    %dst1 =l add %dst, 1
    storeb 0, %dst1
    %dst2 =l add %dst, 2
    storeb 0, %dst2
    ret
@calc
    %fi =d swtof %iter
    %fm =d swtof %max_iter
    %t =d div %fi, %fm
    %omt =d sub d_1.0, %t
    # t² and t³
    %t2 =d mul %t, %t
    %t3 =d mul %t2, %t
    # (1-t)², (1-t)³
    %omt2 =d mul %omt, %omt
    %omt3 =d mul %omt2, %omt
    # R = 9 * omt * t³ * 255
    %r0 =d mul d_9.0, %omt
    %r1 =d mul %r0, %t3
    %r2 =d mul %r1, d_255.0
    %ri =w dtosi %r2
    # G = 15 * omt² * t² * 255
    %g0 =d mul d_15.0, %omt2
    %g1 =d mul %g0, %t2
    %g2 =d mul %g1, d_255.0
    %gi =w dtosi %g2
    # B = 8.5 * omt³ * t * 255
    %b0 =d mul d_8.5, %omt3
    %b1 =d mul %b0, %t
    %b2 =d mul %b1, d_255.0
    %bi =w dtosi %b2
    # BMP pixel order is BGR
    storeb %bi, %dst
    %dg =l add %dst, 1
    storeb %gi, %dg
    %dr =l add %dst, 2
    storeb %ri, %dr
    ret
}

# Write 54-byte BMP header to file
function $write_header(l %fp, w %w, w %h) {
@start
    %hdr =l alloc4 54
    # --- File header (14 bytes) ---
    # Signature 'B','M'
    storeb 66, %hdr
    %o1 =l add %hdr, 1
    storeb 77, %o1
    # File size = 54 + row_size * height
    # row_size = (w*3 + 3) & ~3
    %wb =w mul %w, 3
    %wb3 =w add %wb, 3
    %rowsz =w and %wb3, -4
    %imgsz =w mul %rowsz, %h
    %fsz =w add %imgsz, 54
    %o2 =l add %hdr, 2
    storew %fsz, %o2
    # Reserved = 0
    %o6 =l add %hdr, 6
    storew 0, %o6
    # Pixel offset = 54
    %o10 =l add %hdr, 10
    storew 54, %o10
    # --- DIB header (BITMAPINFOHEADER, 40 bytes) ---
    %o14 =l add %hdr, 14
    storew 40, %o14
    # Width, height
    %o18 =l add %hdr, 18
    storew %w, %o18
    %o22 =l add %hdr, 22
    storew %h, %o22
    # Planes = 1 (16-bit)
    %o26 =l add %hdr, 26
    storeh 1, %o26
    # Bits per pixel = 24 (16-bit)
    %o28 =l add %hdr, 28
    storeh 24, %o28
    # Compression = 0
    %o30 =l add %hdr, 30
    storew 0, %o30
    # Image size
    %o34 =l add %hdr, 34
    storew %imgsz, %o34
    # X/Y pixels per meter = 0
    %o38 =l add %hdr, 38
    storew 0, %o38
    %o42 =l add %hdr, 42
    storew 0, %o42
    # Colors used = 0, important = 0
    %o46 =l add %hdr, 46
    storew 0, %o46
    %o50 =l add %hdr, 50
    storew 0, %o50
    # Write header to file
    %n =l call $fwrite(l %hdr, l 1, l 54, l %fp)
    ret
}

export function w $main() {
@start
    # Image dimensions
    %width =w copy 800
    %height =w copy 600
    # Complex plane bounds
    # real: [-2.5, 1.0], imag: [-1.3125, 1.3125]
    %re_min =d copy d_-2.5
    %re_max =d copy d_1.0
    %im_min =d copy d_-1.3125
    %im_max =d copy d_1.3125
    %max_iter =w copy 256
    # Compute scale factors
    # scale_x = (re_max - re_min) / width
    # scale_y = (im_max - im_min) / height
    %re_range =d sub %re_max, %re_min
    %im_range =d sub %im_max, %im_min
    %fw =d swtof %width
    %fh =d swtof %height
    %sx =d div %re_range, %fw
    %sy =d div %im_range, %fh
    # Row size = (width*3 + 3) & ~3
    %wb =w mul %width, 3
    %wb3 =w add %wb, 3
    %rowsz =w and %wb3, -4
    # Allocate row buffer
    %rowsz_l =l extsw %rowsz
    %rowbuf =l call $malloc(l %rowsz_l)
    # Open output file
    %fp =l call $fopen(l $filename, l $mode)
    %null =w ceql %fp, 0
    jnz %null, @err, @opened
@err
    ret 1
@opened
    # Write BMP header
    call $write_header(l %fp, w %width, w %height)
    # BMP rows are stored bottom-to-top: row 0 in the file is the bottom
    # of the image. We want bottom = im_min and top = im_max, so file
    # row y (counting from the bottom) maps to im = im_min + y * sy.
    jmp @row_head
@row_head
    %y =w phi @opened 0, @row_next %y1
    %cmp_y =w csltw %y, %height
    jnz %cmp_y, @row_body, @done
@row_body
    # ci = im_min + y * sy
    %fy =d swtof %y
    %ci =d mul %fy, %sy
    %ci2 =d add %im_min, %ci
    # Zero the padding bytes in the row buffer
    # (just zero the whole row for simplicity)
    %rs_l =l extsw %rowsz
    call $memset(l %rowbuf, w 0, l %rs_l)
    jmp @col_head
@col_head
    %x =w phi @row_body 0, @col_next %x1
    %cmp_x =w csltw %x, %width
    jnz %cmp_x, @col_body, @write_row
@col_body
    # cr = re_min + x * sx
    %fx =d swtof %x
    %cr =d mul %fx, %sx
    %cr2 =d add %re_min, %cr
    # Compute mandelbrot iteration
    %iter =w call $iterate(d %cr2, d %ci2, w %max_iter)
    # Compute pixel offset in row buffer: x * 3
    %off =w mul %x, 3
    %off_l =l extsw %off
    %pix =l add %rowbuf, %off_l
    # Write color
    call $color(l %pix, w %iter, w %max_iter)
    jmp @col_next
@col_next
    %x1 =w add %x, 1
    jmp @col_head
@write_row
    # Write row to file
    %wr =l call $fwrite(l %rowbuf, l 1, l %rs_l, l %fp)
    jmp @row_next
@row_next
    %y1 =w add %y, 1
    jmp @row_head
@done
    call $fclose(l %fp)
    call $free(l %rowbuf)
    ret 0
}
```
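The 1440054-byte file size reported in the byte-identical check follows from the header arithmetic in `$write_header`. Here is a minimal C sketch of the same calculation; the function names are hypothetical.

```c
#include <stdint.h>

/* Bytes per BMP pixel row for 24-bit pixels: 3 bytes per pixel, padded up to
   a multiple of 4, i.e. (w*3 + 3) & ~3 as in $write_header. */
static uint32_t bmp_row_size(uint32_t w) {
    return (w * 3u + 3u) & ~3u;
}

/* Total file size: 14-byte file header + 40-byte BITMAPINFOHEADER + pixel rows. */
static uint32_t bmp_file_size(uint32_t w, uint32_t h) {
    return 54u + bmp_row_size(w) * h;
}
```

For 800x600, each row is 2400 bytes (already a multiple of 4, so no padding), giving 54 + 2400 × 600 = 1,440,054 bytes. A 5-pixel-wide image, by contrast, needs 15 bytes of pixels padded to a 16-byte row.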

The Final Programming Language

Thinking back to the 1980s once again, I remember several products marketed as English-to-machine-code translators: English in, executable binaries out. Did they actually work well? No. Now we may have finally achieved that dream, at least compared to what those 1980s attempts could do.

There was something called “The Last One” from 1981, a rather prescient name, and obviously one far ahead of its capabilities. It was marketed as “The last program you’ll ever need to buy,” and it claimed to turn your menu selections into BASIC code.

Another product I read about at the time in Compute! was “Plain English,” which tried to compile English instructions into efficient code. It also appeared in a few of Nibble magazine’s “On the Scene” columns in 1984. I can’t point to the exact pages; some weren’t digitized, and the rest are hard to search. There was also a 1984 InfoWorld review that I can’t find either. Check out that Compute! archive, though. It’s fun reading.

Tracking down online references from the 1980s proved pretty difficult; this is a case where my memory isn’t much worse than Google results. Gemini knows more (or so it says), but it utterly fails to provide correct references.

Anyway, I thought it was an interesting contrast to see how far we’ve come.

Parting Thoughts

In a sense, if LLM coding models + agents can emit working assembly code, you have eliminated the need to buy or acquire any other programs, even C compilers.

Actually constructing software this way isn’t a great idea. For one thing, assembly code takes many tokens per high-level operation, so your LLM will quickly hit its context limit when reviewing the code, which you’ll need to do constantly because it’s so hard to read.

Still, in theory, if all you had was an LLM chat session and a nearly empty computer with nothing but a bare Linux install, you could bootstrap your way to a mostly standards-compliant C compiler written in assembly, and then build a genuinely good compiler on top of it.